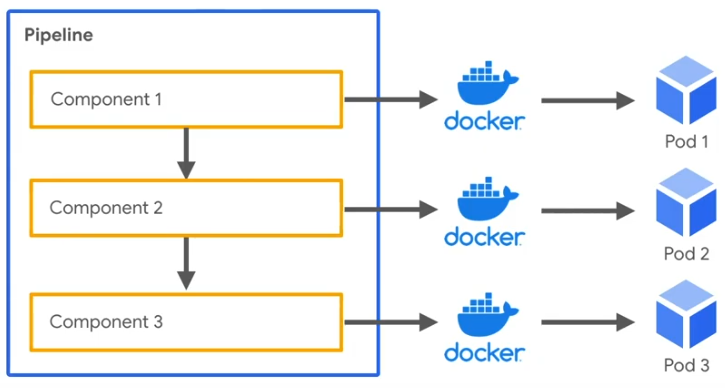

Airflow has released a new version (2.0.0) with new features, so it is time to start testing it before deploying it in production. This article offers a couple of scripts that can help anyone with the same goal.

To run Airflow with the Kubernetes executor, we need to set two environment variables:

- The executor Airflow should use, set to KubernetesExecutor: `name: AIRFLOW__CORE__EXECUTOR`, `value: KubernetesExecutor`.
- The namespace in which our worker pods run: `name: AIRFLOW__KUBERNETES__NAMESPACE`, `value: airflow-k8sexecutor`.

Step 1: Deploy Apache Airflow and load DAG files

The first step is to deploy Apache Airflow on your Kubernetes cluster using Bitnami's Helm chart. Next, execute the following command to deploy Apache Airflow and to fetch your DAG files from a Git repository at deployment time.

Further reading: Airflow Kubernetes Official Documentation; Configure Airflow Git-sync using Helm Chart.

Provision NFS-backed PVCs for Airflow DAGs and Airflow logs

This guide assumes you already have an NFS server set up, with a hostname or IP address that is reachable from your on-premises Kubernetes cluster, and that you have configured a path on it to be used for the OpenMetadata Airflow Helm dependency.

To provision PersistentVolumes dynamically through a StorageClass, you need to install an NFS provisioner. The nfs-subdir-external-provisioner Helm chart is recommended for this case. Replace NFS_HOSTNAME_OR_IP with your NFS server's value and run the commands. This creates a new StorageClass backed by nfs-subdir-external-provisioner, which you can view with `kubectl get storageclass -n nfs-provisioner`.

Code samples for PVC for Airflow DAGs

Create the PersistentVolumes and PersistentVolumeClaims with the command below.

Code samples for PVC for Airflow logs

Create the PersistentVolumes and PersistentVolumeClaims with the command below.

Change owner and permission manually on disks

Since Airflow pods run as non-root users, they do not have write access to the NFS server volumes. To fix the permissions, spin up a pod with the persistent volumes attached and run it once. Follow these steps:

Attention: if you leave this infrastructure up and running, you will be billed for the whole time it stays up. So please make sure you destroy everything after your study:

`terraform destroy -var-file terraform.tfvars`
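The executor settings described above follow Airflow's `AIRFLOW__{SECTION}__{KEY}` convention. An environment variable with that name overrides option KEY in section [SECTION] of airflow.cfg. The Python sketch below renders the two settings as a Kubernetes container `env` list. It assumes Airflow 2.0.x option names, and the helper functions are hypothetical illustrations, not part of Airflow.

```python
"""Sketch: environment variables that switch Airflow 2.0 to the KubernetesExecutor.

Airflow treats a variable named AIRFLOW__{SECTION}__{KEY} as an override for
option KEY in section [SECTION] of airflow.cfg. The two values come from the
article; the helper functions are hypothetical illustrations.
"""

import json
from typing import Dict, List, Tuple

# (section, key) -> value, using Airflow 2.0.x section names.
EXECUTOR_SETTINGS: Dict[Tuple[str, str], str] = {
    ("core", "executor"): "KubernetesExecutor",
    ("kubernetes", "namespace"): "airflow-k8sexecutor",
}


def to_env_name(section: str, key: str) -> str:
    """Build the AIRFLOW__SECTION__KEY variable name Airflow looks for."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


def from_env_name(name: str) -> Tuple[str, str]:
    """Split AIRFLOW__SECTION__KEY back into a lowercase (section, key) pair."""
    prefix, section, key = name.split("__", 2)
    if prefix != "AIRFLOW":
        raise ValueError(f"not an Airflow config variable: {name}")
    return section.lower(), key.lower()


def container_env(settings: Dict[Tuple[str, str], str]) -> List[Dict[str, str]]:
    """Render the settings as the `env` list of a Kubernetes container spec."""
    return [
        {"name": to_env_name(section, key), "value": value}
        for (section, key), value in settings.items()
    ]


if __name__ == "__main__":
    print(json.dumps(container_env(EXECUTOR_SETTINGS), indent=2))
```

Later Airflow 2.x releases renamed the [kubernetes] section to [kubernetes_executor], so check the configuration reference for the version you actually deploy before reusing the namespace variable name.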

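The NFS steps above can be sketched as Kubernetes manifests generated from Python. The sketch creates dynamically provisioned PVCs for DAGs and logs, then adds a run-once pod that fixes ownership on the mounted volumes. This is not the article's missing command. The StorageClass name `nfs-client` (the nfs-subdir-external-provisioner chart's default), the claim names, sizes, mount paths, namespace, and the Airflow UID/GID 50000:0 (the official apache/airflow image user) are all assumptions to adapt to your setup.

```python
"""Sketch: NFS-backed PVCs for Airflow DAGs and logs, plus a run-once Pod
that fixes ownership on the mounted volumes.

Assumptions (not taken from the article):
  * STORAGE_CLASS is "nfs-client", the default name used by the
    nfs-subdir-external-provisioner chart; check `kubectl get storageclass`.
  * Claim names, sizes, mount paths and NAMESPACE are hypothetical.
  * AIRFLOW_UID/AIRFLOW_GID (50000:0) match the official apache/airflow
    image; use the user your own Airflow image runs as.
"""

import json
from typing import Any, Dict, List

STORAGE_CLASS = "nfs-client"         # assumption: chart's default StorageClass name
NAMESPACE = "airflow"                # hypothetical namespace
AIRFLOW_UID, AIRFLOW_GID = 50000, 0  # assumption: official image's user and group

# Hypothetical claim name -> (requested size, mount path used by the fix-up Pod).
CLAIMS = {
    "airflow-dags": ("1Gi", "/opt/airflow/dags"),
    "airflow-logs": ("5Gi", "/opt/airflow/logs"),
}


def nfs_pvc(name: str, size: str) -> Dict[str, Any]:
    """ReadWriteMany claim, so scheduler, webserver and workers can share it."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": NAMESPACE},
        "spec": {
            "accessModes": ["ReadWriteMany"],
            "storageClassName": STORAGE_CLASS,  # the provisioner creates the PV
            "resources": {"requests": {"storage": size}},
        },
    }


def permission_fix_pod() -> Dict[str, Any]:
    """Pod that runs once as root and chowns every claim to the Airflow user."""
    volumes: List[Dict[str, Any]] = []
    mounts: List[Dict[str, Any]] = []
    for i, (claim, (_, path)) in enumerate(CLAIMS.items()):
        volumes.append({"name": f"vol-{i}", "persistentVolumeClaim": {"claimName": claim}})
        mounts.append({"name": f"vol-{i}", "mountPath": path})
    paths = " ".join(path for _, path in CLAIMS.values())
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "airflow-volume-permissions", "namespace": NAMESPACE},
        "spec": {
            "restartPolicy": "Never",  # run the command a single time
            "containers": [{
                "name": "fix-permissions",
                "image": "busybox",
                "securityContext": {"runAsUser": 0},
                "command": ["sh", "-c", f"chown -R {AIRFLOW_UID}:{AIRFLOW_GID} {paths}"],
                "volumeMounts": mounts,
            }],
            "volumes": volumes,
        },
    }


def manifests() -> Dict[str, Any]:
    """All objects wrapped in a v1 List, which `kubectl apply -f` accepts."""
    items = [nfs_pvc(name, size) for name, (size, _) in CLAIMS.items()]
    items.append(permission_fix_pod())
    return {"apiVersion": "v1", "kind": "List", "items": items}


if __name__ == "__main__":
    print(json.dumps(manifests(), indent=2))
```

Pipe the output to kubectl, for example `python airflow_nfs.py | kubectl apply -f -` (the file name is hypothetical). Delete the pod once it has completed. If the NFS export uses root_squash, root inside the pod is mapped to an unprivileged user and the chown fails. In that case, change ownership on the NFS server itself.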